▲ An image related to ChatGPT (Source: Pixabay)

Since the release of ChatGPT, the use of AI has grown significantly worldwide, and technological competition among companies in the AI sector has intensified. Beyond the existing function of providing text-based answers to users' questions, AI development now extends to emulating human-like personalities and handling a variety of data inputs and outputs, such as voice, images, and video.

On September 25, OpenAI, the developer of ChatGPT, published a post on its website titled "ChatGPT can now see, hear, and speak." OpenAI expects that letting users interact with ChatGPT through voice and images in their daily lives will offer a new, more intuitive type of interface. For example, if you are fixing a bike, you can ask ChatGPT for repair instructions and receive immediate spoken responses, or you can ask it to read you a bedtime story while you are lying in bed. ChatGPT even offers five different voices, with names such as 'Juniper,' 'Breeze,' and 'Ember,' allowing users to choose the AI's voice and persona for customized conversations. ChatGPT can also recognize images and respond based on them. If a user uploads a photo of a math problem, ChatGPT can recognize it and explain the solution aloud. Similarly, when traveling, users can take photos, share them with ChatGPT, and talk in real time about interesting things at the location, as if chatting with a friend. These new features will initially be released to subscribers of OpenAI's paid service, 'ChatGPT Plus.'

With these new functions enabling more human-like conversations, some observers say that big tech companies such as Amazon, Apple, and Samsung, which have been providing voice AI services, are becoming increasingly concerned. On the same day that OpenAI announced ChatGPT's new features, Amazon announced an investment of up to $4 billion in the AI startup Anthropic. The Washington Post reported, "Just as Microsoft gained an edge over the AI competitors through its collaboration with OpenAI, Amazon, which has been criticized for lagging in the development of generative AI technology, aims to achieve a similar goal through its partnership with Anthropic." In addition, Meta, the operator of Facebook and Instagram, is accelerating the development of dozens of generative AI chatbots with distinct personalities. The technology targets young people, the main users of social media, and is being developed in the hope that influencers and creators will use AI chatbots to communicate more effectively with their fans and followers. Integrating AI chatbots into existing services in this way, so that users can communicate through multiple modes such as text, voice, and images, is expected to make interaction between humans and machines richer and more effective.

Meanwhile, concerns about increasingly advanced AI technologies are also growing. Experts point out that while the interaction between new AI technology and users continues to evolve, its impact on society remains uncertain. They argue that when AI chatbots process large amounts of information and present it to users as if it were real, what users experience is merely an "illusion." As a result, some are concerned that technologies such as the voice synthesis used in ChatGPT could be exploited for cybercrimes such as deepfakes. AI researchers have also warned that users may anthropomorphize the chatbot because of its human-like responses, and that if users accept unverified information too readily, they may place unwarranted trust in the AI's capabilities.

There are also concerns about AI technology acquiring human-like voices and personalities, because research has shown that giving an AI personality traits increases the risk of inappropriate and aggressive responses. According to a paper from the Allen Institute for AI posted to an open-access preprint archive, ChatGPT's risk of generating false stereotypes, harmful dialogue, and misinformation increased by up to six times depending on the persona assigned to it. As competition among big tech companies over AI chatbot technologies intensifies, discussions and regulations addressing privacy and ethical issues should begin as soon as possible.

By Park Jeong-hyeon, reporter jhgongju0903@gmail.com

<Copyright © The Campus Journal. Unauthorized reproduction and redistribution prohibited.>

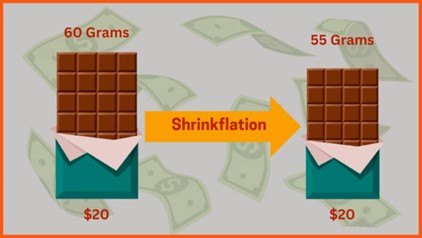

Shrinkflation, Consumer Deception

Shrinkflation, Consumer Deception