▲ From left: Elon Musk, who backs a pause in AI development, and Eric Schmidt, who backs continuing it (Source: Reuters, Chosun DB)

With ChatGPT growing rapidly, the question of whether AI development should continue has come under fire. Issue No. 314 of this paper covered ChatGPT's intellectual property issues and its potential for abuse at universities. Since then, the underlying model was upgraded from GPT-3.5 to GPT-4.0 and re-released in March. GPT-4.0 can now accept images as input, and it scores in the top 10% on the U.S. bar exam and on the college entrance exam. One limitation carries over from the previous version: it still cannot recognize false information in its input. OpenAI, which developed ChatGPT, assessed that GPT-4.0 has reached the level of undergraduate students at Stanford University.

Opinions in Silicon Valley are divided on whether to continue AI development. In March, the Future of Life Institute (FLI), a U.S. nonprofit organization that calls for ethical AI development, published an open letter titled "Pause Giant AI Experiments: An Open Letter." The letter said, "Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable." It also argued that research on AI more powerful than GPT-4.0 should be paused and that a system for controlling AI risks should be set up first. Signatories included Tesla CEO Elon Musk, Apple co-founder Steve Wozniak, historian Yuval Harari, and University of Montreal Professor Yoshua Bengio, a winner of the Turing Award, often called the Nobel Prize of IT. OpenAI did not state a position on the letter.

Opponents of a pause believe that its supporters' concerns are excessive. Microsoft co-founder Bill Gates said that because AI clearly has benefits, what is needed is to identify where its problems lie, and he argued that AI should be viewed as a means of solving problems, not as their cause. AI experts Professor Andrew Ng of Stanford University and Professor Yann LeCun of New York University also argued that AI development should not be halted outright, but should continue while suitable regulatory measures are worked out alongside it. Former Google CEO Eric Schmidt said, "I don't approve of a moratorium on development because it will benefit China," and warned that a moratorium would only hand an opportunity to countries beyond U.S. influence.

Amid conflicting expert opinions on AI development, governments around the world are struggling to establish rules suited to AI services. The European Union earlier proposed the world's first AI regulation, known as the "AI Act." The bill, which would make it possible to ban certain AI services, will be introduced at a plenary session in May, after the European Parliament adopts its position later this month. On March 31, the Italian Data Protection Authority blocked access to ChatGPT in Italy entirely, citing concerns that it collects Italians' data without permission. The Biden administration in the U.S. has also begun reviewing regulations in light of the impact of AI systems on the country. On April 11, the National Telecommunications and Information Administration (NTIA) under the U.S. Department of Commerce announced that it would gather public comment on AI systems over the next 60 days to inform its advice on AI policy. On the same day, China's internet regulator, the Cyberspace Administration of China (CAC), announced a draft management plan for AI services that said, "Content made with AI technology must reflect China's core socialist values."

Since the launch of ChatGPT, unprecedented global attention has amplified both the concerns about and the expectations for generative AI. As a result, various efforts are under way to narrow the gap between the opposing views, alongside discussions on how great a threat AI poses to humanity, how far AI development will be regulated, and whether halting technological development is possible in practice. Attention is now focused on whether these moves will mark the beginning of productive AI regulation or whether excessive regulation will hinder the commercialization and innovation of AI technology.

By Kim So-ha, cub-reporter lucky.river16@gmail.com

<Copyright © The Campus Journal. Unauthorized reproduction and redistribution prohibited.>

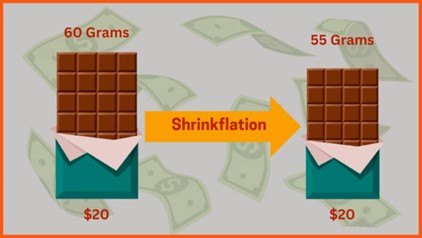

Shrinkflation, Consumer Deception